Cloud native interest and uptake are growing fast. With that growth comes the need for networking to keep pace, particularly in performance, scale and service awareness.

You have a multitude of Kubernetes CNI plugins to choose from, all providing you with basic pod network connectivity. You could say that Kubernetes pod networking 1.0 is complete.

Figure: K8s pod networking 1.0 evolving towards K8s pod networking 2.0

What is needed for K8s pod networking 2.0?

Make policies and services first-class citizens. In effect, you have K8s policy and service awareness at the network layer.

Performance. Application workloads sensitive to network resource constraints are a reality. This includes applications using any or all data plane features such as ACLs, NAT, IPsec and tunnel encap/decap.

Interface Versatility. The network must accommodate your classic kernel interfaces (e.g. tapv2, veth) and newer, performance-optimized interfaces such as memif.

Observability. You can never have enough pushed or pulled stats, telemetry, logs and tracing consumed by your management and observability apps. Any solution must include mechanisms providing you with detailed awareness and visibility of network behavior and performance.

API Support. Existing CNI plugins incorporate their own API implementations, resulting in potential lock-in dependencies. Open and documented API development tools, plugins and management agents make it easy for you to build applications and services that leverage a high-performance network data plane. Now your developer community can devote cycles to what is really important: applications.

Fast Configuration Programmability. Container setup and teardown are fast and furious. You don’t have time to wait on configuration updates from a central repository. It is faster and more accurate to “watch” a data store for changes and immediately execute the desired configuration updates.

CNF. Embrace cloud native network functions (CNFs) where appropriate. This means introducing CNFs into a K8s cluster data plane, using them to construct a service chain, or both.

Immutability. Subscribing to immutable infrastructure best practices enables your host and kernel infrastructure to remain unchanged as you add and delete resources from the network.

Evolvability. You can innovate independently of the existing environment without deviating from K8s-based deployment and operations best practices.

It goes without saying that pod networking 2.0 must be 100% Kubernetes compliant, and private/hybrid/public cloud-friendly.
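The “watch” approach described under Fast Configuration Programmability can be illustrated with a minimal sketch. It assumes a hypothetical in-memory `WatchableStore` standing in for a real data store, and a hypothetical `apply_config` callback standing in for whatever programs the data plane; neither name comes from an actual CNI plugin. The point is only that a change is pushed to the watcher the moment it is written, rather than discovered later by polling.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Optional

# Callback signature: (key, new_value); new_value is None when the key is deleted.
WatchCallback = Callable[[str, Optional[str]], None]


class WatchableStore:
    """Hypothetical in-memory stand-in for a watchable key-value data store."""

    def __init__(self) -> None:
        self._data: Dict[str, str] = {}
        self._watchers: Dict[str, List[WatchCallback]] = defaultdict(list)

    def watch(self, prefix: str, callback: WatchCallback) -> None:
        """Register a callback for every change under the given key prefix."""
        self._watchers[prefix].append(callback)

    def put(self, key: str, value: str) -> None:
        self._data[key] = value
        self._notify(key, value)

    def delete(self, key: str) -> None:
        # Only notify watchers if the key actually existed.
        if self._data.pop(key, None) is not None:
            self._notify(key, None)

    def _notify(self, key: str, value: Optional[str]) -> None:
        for prefix, callbacks in self._watchers.items():
            if key.startswith(prefix):
                for callback in callbacks:
                    callback(key, value)


# Hypothetical record of what has been "programmed" into the data plane.
applied: Dict[str, str] = {}


def apply_config(key: str, value: Optional[str]) -> None:
    """Apply a change immediately; a None value removes the configuration."""
    if value is None:
        applied.pop(key, None)
    else:
        applied[key] = value


store = WatchableStore()
store.watch("/pods/", apply_config)
store.put("/pods/web-1", "ip=10.1.1.2")  # pushed to the watcher at once
store.put("/nodes/n1", "ignored")        # outside the watched prefix
```

A production data store would deliver these events over the network and handle reconnects and resynchronization, which this sketch leaves out.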

Contiv-VPP is a Kubernetes CNI network plugin designed and built to address pod networking 2.0 requirements. It uses FD.io VPP to provide policy and service-aware data plane functionality consistent with K8s orchestration and lifecycle management.

Contiv-VPP features consist of the following:

Maps K8s policy and service mechanisms such as label selectors to the FD.io/VPP data plane. Policies and services become network-aware, enabling your containerized applications to receive optimal transport resources.

Implemented in user space for rapid innovation and immutability.

Programmability enabled by a Ligato-based VPP agent that provides you with APIs for FD.io/VPP data plane configuration.

contiv-netctl for CLI management and control.
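To make the label selector mechanism mentioned in the first feature more concrete, here is a minimal sketch of equality-based selector matching. The `matches` and `select_pods` helpers and the pod data are hypothetical and written only for illustration; they show how a selector resolves to a set of pods, not how Contiv-VPP performs the mapping internally.

```python
from typing import Dict, List

Labels = Dict[str, str]


def matches(selector: Labels, labels: Labels) -> bool:
    """Equality-based selector: every selector pair must appear in the labels.

    Note: an empty selector matches every pod in this sketch.
    """
    return all(labels.get(key) == value for key, value in selector.items())


def select_pods(selector: Labels, pods: Dict[str, Labels]) -> List[str]:
    """Return the sorted names of all pods whose labels satisfy the selector."""
    return sorted(name for name, labels in pods.items() if matches(selector, labels))


# Hypothetical pods and labels.
pods = {
    "web-1": {"app": "web", "tier": "frontend"},
    "web-2": {"app": "web", "tier": "frontend"},
    "db-1": {"app": "db", "tier": "backend"},
}

frontend = select_pods({"tier": "frontend"}, pods)
```

Once a selector is resolved to pods, a network-aware plugin can translate the result into data plane configuration for the interfaces and addresses of those pods.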

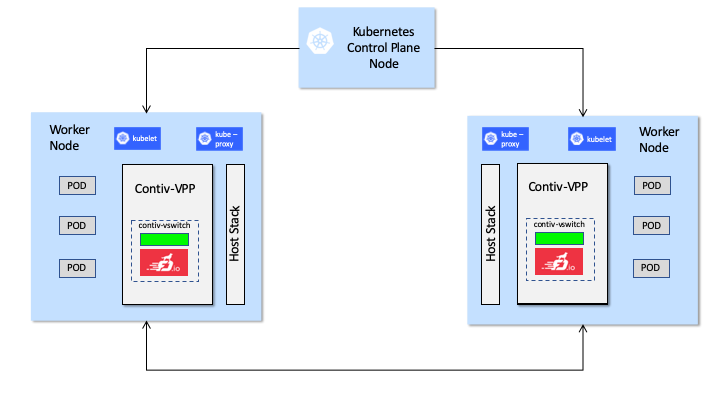

Figure: High-level overview of a cluster running Contiv-VPP

Like any other CNI plugin, Contiv-VPP contains all of the usual “hooks” to bootstrap and manage the network.

At a high level, you have Contiv-VPP components on the control plane node that receive and store K8s policy and service information in an etcd data store. Contiv-VPP node agents listen for updates and program the data plane accordingly. The contiv-vswitch includes the Ligato VPP agent and FD.io/VPP packaged in a single container.

The unique aspects of Contiv-VPP are:

K8s policy and service mapping to the FD.io/VPP configuration. You have an automated distribution pipeline that maps K8s policies and services to FD.io/VPP configuration information programmed into the network.

contiv-vswitch composed of the FD.io/VPP data plane and the Ligato VPP agent. The contiv-vswitch runs in user space and uses DPDK for direct access to the network I/O layer.

IPv6/SRv6. In many scenarios, a K8s deployment requires a network address translation (NAT) component so that client pods can access services identified with an internal clusterIP address. This introduces another level of indirection that adds complexity and impacts scale and performance. Contiv-VPP supports IPv6/SRv6 CNI so you do not require a NAT function in your deployment.
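The level of indirection that NAT introduces for clusterIP services can be sketched as follows. The `ServiceNat` class, the addresses and the round-robin backend choice are all assumptions made for illustration; real NAT implementations select backends in various ways and also track connection state. The sketch only shows that every packet addressed to a clusterIP needs a table lookup and a destination rewrite before it can reach a pod.

```python
from itertools import cycle
from typing import Dict, Iterator, List, Tuple

Endpoint = Tuple[str, int]  # (IP address, port)


class ServiceNat:
    """Toy destination-NAT table: clusterIP:port -> rotating backend pods."""

    def __init__(self) -> None:
        self._rotations: Dict[Endpoint, Iterator[Endpoint]] = {}

    def add_service(self, cluster_ip: str, port: int, backends: List[Endpoint]) -> None:
        if not backends:
            raise ValueError("a service needs at least one backend")
        # Round-robin rotation is an assumption of this sketch.
        self._rotations[(cluster_ip, port)] = cycle(backends)

    def translate(self, dst_ip: str, dst_port: int) -> Endpoint:
        """Rewrite the destination if it is a known clusterIP, else pass through."""
        rotation = self._rotations.get((dst_ip, dst_port))
        if rotation is None:
            return (dst_ip, dst_port)
        return next(rotation)


# Hypothetical service and backend addresses.
nat = ServiceNat()
nat.add_service("10.96.0.10", 80, [("10.1.1.2", 8080), ("10.1.2.3", 8080)])

first = nat.translate("10.96.0.10", 80)
second = nat.translate("10.96.0.10", 80)
direct = nat.translate("10.1.1.2", 8080)  # pod address: no translation needed
```

The extra lookup and rewrite on each service-bound packet, plus the state needed to reverse the translation on replies, is the kind of overhead a NAT-free design avoids.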